Hi, this is Ray.

Confession upfront: I use AI tools. A lot. I bounce ideas off them, I use them to debug code, I use them to brainstorm titles for these very newsletters. I am not here to wag a finger at AI from some pristine luddite mountaintop. I am writing this on a computer and I have a phone in my pocket and I have asked an LLM, more than once, to help me explain a concept I half-understand. I'm not above the trap. I'm in the trap. We're all in the trap.

But (and this is a big but) the research that's come out in the last 18 months on AI and learning is genuinely sobering, and I think a lot of us (me very much included) have been quietly building up something the researchers are now calling "cognitive debt" without realizing it. The same way you can rack up financial debt by spending small amounts repeatedly without checking the balance, you can rack up cognitive debt by outsourcing small bits of thinking, again and again, until one day you sit down to do real intellectual work and realize the muscle isn't there anymore.

Today's newsletter is me sounding the alarm I needed someone to sound for me about a year ago. Not because AI is evil. AI is a tool. But the way most of us are using it, especially for learning, is actively making us worse at the very things we're trying to learn. Let's get into the science.

The MIT Study That Should Concern Everyone

Let's start with the study that's been making the rounds, because it's the most direct evidence we have. Researchers at the MIT Media Lab ran a four-month experiment using EEG to monitor brain activity while participants wrote essays, splitting them into three groups: a Brain-only group (no tools), a Search Engine group, and an LLM group (using ChatGPT).

The findings were stark. According to the researchers, over four months, LLM users consistently underperformed at neural, linguistic, and behavioral levels, with reduced alpha and beta connectivity in their brains indicating under-engagement. Self-reported ownership of essays was lowest in the LLM group and highest in the Brain-only group, and LLM users also struggled to accurately quote their own work. Read that last part again. They couldn't accurately quote essays they had ostensibly written themselves. Because they hadn't really written them. Their brains hadn't engaged deeply enough with the content for it to actually become theirs. The essay went in one ear (or one prompt window) and out the other, with the surface-level imprint of "I did this" but none of the underlying neural traces of "I understood this."

The researchers introduced a phrase for what was happening: cognitive debt. The idea is that every time you let an AI do thinking that you could have done yourself, you skip the cognitive workout that would have built durable understanding. Each individual instance feels harmless… it's just a small task, why bother thinking through it myself when ChatGPT can do it in two seconds? But the cumulative effect is real. Your brain didn't lift the weight. Your brain didn't get stronger. And over months, the gap between "I have access to the answer" and "I actually understand the material" gets wider and wider. Smeagol-coded behavior. The Ring is convenient. The Ring is also slowly hollowing you out.

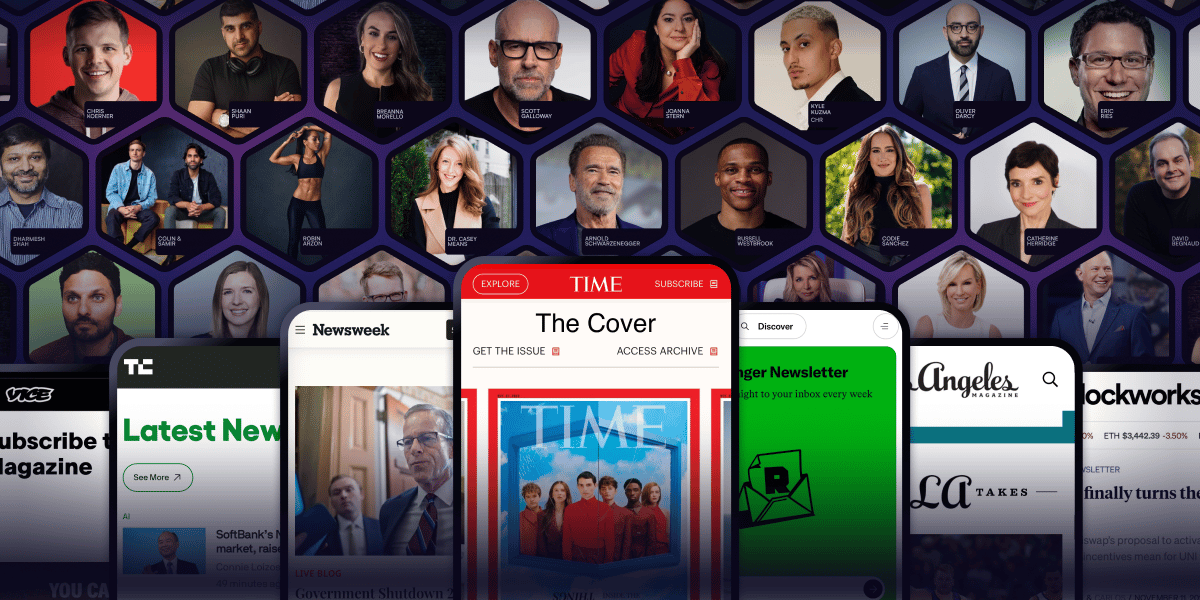

The Randomized Trial That Sealed It

If the MIT study was concerning, a randomized controlled trial published in 2025 in an educational research journal is more concerning still. The researchers tested whether unrestricted access to ChatGPT during self-directed study helps or hurts long-term knowledge retention.

The result: students who learned using traditional methods outperformed their AI-assisted counterparts on retention tests by a substantial margin, with an effect size of 0.68, directly supporting the hypothesis that AI use during learning impairs long-term retention. For context, an effect size of 0.68 in education research is huge. It's the kind of effect that would normally make a teaching intervention famous. Except here, the "intervention" was using ChatGPT, and the effect went in the opposite direction. The AI-assisted learners got worse retention, by a lot, compared to the traditional learners.
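If you want a feel for what 0.68 means in practice, here's a rough back-of-the-envelope sketch. Big caveat: I'm assuming the reported effect size is a standardized mean difference (something like Cohen's d) and that retention scores in both groups are roughly normal with similar spread. Neither assumption comes from the paper itself, and the function names below are my own, so treat the output as an illustration, not a result from the study.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def interpret_effect_size(d: float) -> dict:
    """Translate a standardized mean difference into two plain-language numbers.

    Assumes (hypothetically) normal scores with equal variance in both groups.
    """
    return {
        # Share of the higher-scoring group that beats the other group's
        # average learner (sometimes called Cohen's U3).
        "share_above_other_mean": normal_cdf(d),
        # Chance that a random learner from the higher-scoring group
        # outscores a random learner from the other group.
        "prob_superiority": normal_cdf(d / math.sqrt(2.0)),
    }


result = interpret_effect_size(0.68)
print(f"Share above the other group's mean: {result['share_above_other_mean']:.1%}")
print(f"Probability of superiority: {result['prob_superiority']:.1%}")
```

Under those assumptions, roughly three out of four traditional learners would land above the average AI-assisted learner on the retention test. That's the gap we're talking about.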

Why? The researchers point to two well-established cognitive principles. First is cognitive offloading: when you let an external tool do your thinking, your brain doesn't do the encoding work that would normally lay down a memory. Second is desirable difficulties: a body of research showing that productive struggle (the kind of effortful processing that feels hard in the moment) is exactly what produces durable learning. Smooth out the struggle, and you smooth out the learning along with it. As the researchers put it, unlike a calculator or a notebook that offloads specific tasks or memory storage, AI can offload entire cognitive processes… including comprehension, synthesis of information, and even aspects of critical thinking. That last bit is the key. We've had cognitive tools before. None of them could outsource comprehension itself. AI can. And when you let it, you skip the very thing that makes learning happen.

The Critical Thinking Casualty

It's not just retention that takes a hit. A 2025 study with 666 participants found that there was a significant negative correlation between frequent AI tool usage and critical thinking abilities, mediated by increased cognitive offloading… and younger participants exhibited higher dependence on AI tools and lower critical thinking scores compared to older participants. The more people used AI, the worse they performed on critical thinking measures. And the effect was strongest in younger users, who have presumably been outsourcing thinking to AI for longer relative to their developmental stage.

A separate large-scale survey of knowledge workers found that frequent users of generative AI report decreased confidence in their critical thinking abilities and reduced cognitive effort in problem-solving tasks. They're not just performing worse… they're aware they're performing worse, and they're putting in less mental effort on tasks that used to require their full attention. That awareness, oddly, isn't enough to break the habit. The convenience overrides the alarm bells. We know we're getting cognitively softer. We do it anyway. Because the friction reduction is just too tempting.

This connects to an older finding about Google. Researchers in the early 2010s described what they called the "Google effect": when people knew they could easily look something up, they remembered WHERE to find the information rather than the information itself. AI is the Google effect on steroids. We're not just remembering where to find facts. We're outsourcing the entire process of working with those facts… analyzing them, connecting them, applying them. The brain regions responsible for that work aren't getting their reps in. The muscle is atrophying.

The Critical Distinction: With vs. For

Now here's where I want to push back against the doom narrative, because the picture isn't black and white. AI isn't categorically bad for learning. The research is actually pretty clear that AI can help learning when used in specific ways… and hurt it when used in others.

A study on generative AI in educational contexts found that AI boosted learning for those who used it to engage in deep conversations and explanations but hampered learning for those who sought direct answers. The key variable wasn't whether you used AI. It was HOW you used it. Asking AI to be a tutor… someone you discuss ideas with, who helps you work through problems, who pushes back when your reasoning is sloppy… seems to support learning. Asking AI to be an answer machine (someone you copy outputs from without engaging) actively damages learning.

The Harvard professor I'd cite on this puts it well. There's no such thing as "AI is good for learning" or "AI is bad for learning." If a student uses AI to do the work for them, rather than to do the work with them, there's not going to be much learning, because no learning occurs unless the brain is actively engaged in making meaning of what you're trying to learn. With AI, not for AI. That's the whole game. One-word difference. Massive cognitive difference.

The researchers who studied this in detail found that students fell into two patterns. The "passive AI-directed" pattern: ask a question, accept the answer, move on. The "collaborative AI-supported" pattern: ask a question, engage with the response, ask follow-ups, push back, synthesize across multiple turns. The first pattern produced almost no critical thinking growth. The second one did. The tool was the same. The relationship to the tool was completely different.

What I'm Actually Doing About This (and What You Might Try)

Okay so I've doomscrolled you through enough research. Here's the practical part. These are the rules I've been trying to follow with my own AI use, with mixed success because, again, I am not immune to the trap.

Rule 1: Try first. Always try first. Before asking AI anything, give yourself at least 5 minutes of trying to figure it out yourself. This is the most important rule. The cognitive workout is in the trying. If I let myself reach for AI the second I feel friction, I've already skipped the part where my brain would have built understanding. The struggle IS the learning. The struggle is exactly what AI removes if I let it.

Rule 2: Use AI as a tutor, not a vending machine. When I do bring AI in, I try to use it Socratically. "Help me understand WHY this works" rather than "give me the answer." "Push back on my reasoning here" rather than "write this for me." "Explain this like I'm a beginner, then quiz me on it." The relationship matters more than the tool.

Rule 3: Make AI explain its work. Then make yourself explain it back. If I ask an AI to help with something, I make a habit of trying to re-derive the answer myself afterward, in my own words, without looking. If I can't, I haven't learned it. I've just witnessed it. There's a huge difference. (Going back to the active recall newsletter from a few weeks ago: this is just active recall applied to AI interactions.)

Rule 4: Never copy-paste anything you want to remember. If a piece of writing, code, or analysis matters, retype it. Yes, retype the whole thing. It's awkward. It's slow. It also actually engages your brain in the material. The MIT study found that LLM users struggled to accurately quote their own essays. Why? Because they didn't write them in any meaningful sense. The act of producing something is part of how you come to understand it.

Rule 5: Have AI-free days. I try to have at least one day a week where I do my work without AI. It's like fasting for your cognitive system. It reminds you what your unaided brain can actually do. It's also genuinely uncomfortable, which tells you something about how much you've been leaning on the tool.

Rule 6: When learning something genuinely new, AI is the LAST resort, not the first. Read the source material. Take real notes. Try to explain it to yourself. Make flashcards. Do the slow, painful, struggle-y version. Only after you've actually engaged with the material on your own should AI come in to fill specific gaps. Reversing this order (AI first, then "studying" the AI's output) is the cognitive equivalent of eating dessert before dinner and wondering why you have no appetite for the actual food.

The Bigger Question

Here's what I keep coming back to. We are running an unprecedented experiment on human cognition right now. For the first time in history, humans have ubiquitous access to a tool that can perform high-level cognitive labor on demand. Nobody knows what 10 years of habituation to this looks like. The early data, as I've covered above, isn't encouraging. The MIT study, the RCT on retention, the studies on critical thinking erosion… these are signals we should take seriously.

But the deeper issue isn't just performance metrics. The deeper issue is what kind of mind we want to have. The reason I learn things isn't to have access to information… I have infinite information already. The reason I learn things is so that the information becomes part of how I think, see, connect, and create. That last step requires the brain to do the work. AI can show me the answer. AI cannot make me into someone who understands. Only the work makes me into that person. Skip the work, skip the becoming.

So the question isn't "should I use AI." Of course you'll use AI. I use AI. The question is whether you're using it to extend your thinking or to replace it. Whether you're walking out of each interaction stronger or weaker. Whether you're building cognitive equity or accumulating cognitive debt.

The convenience is real. The cost is also real. Pay attention to both. The tool is not your enemy. But it is also not your friend. It is a tool. Use it like one. Keep your brain in the loop. Or eventually, the loop will go on without you.

Keep learning (and keep doing the actual thinking),

Ray